LinuxForce’s web hosting services are designed to give our customers strong security, simple ongoing administration and maintenance, and web software that works well in a wide range of situations, including multi-site support for applications.

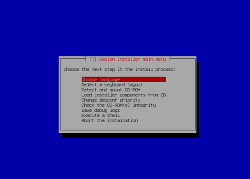

We achieve these objectives by operating a Debian infrastructure that closely adheres to Debian Policy, the Debian web application policy and the Debian PHP policy.

In particular, we use the Debian package for Drupal 6. This provides community-supported upgrades governed by a strong, well-documented policy. Like other software that ships in Debian, the packaged version is often somewhat older than the most recent upstream release, but it is regularly patched for security by the package maintainer and the Debian security team.

In addition, this infrastructure also offers:

- When a security patch is released, only one package needs to be upgraded, rather than every site individually

- Site-specific themes, modules, libraries and uploaded files are strongly separated from the Drupal core files, which keeps the files you want to edit away from the ones you don’t want to edit

- The automation the infrastructure provides minimizes disk usage, memory usage and sysadmin time, thus reducing costs

- The benefits of code maturity, as the Debian Drupal maintainers have already thought through many boundary cases that our staff would otherwise have to rediscover through time-consuming trial and error

Site layout

Experienced Drupal admins may find some of the file locations for the package confusing at first, so let’s clarify the differences to ease the transition.

Our infrastructure supports multiple sites per server. This is implemented by giving each site its own dbconfig.php and settings.php files, plus the following directories:

- files/

- themes/

- modules/

- libraries/

All of these are configurable by the user and are located in /etc/drupal/6/sites/drupal.example.com/. Each site also inherits the contents of the corresponding default directories from core Drupal.

Access to these files via FTP is discussed below.

Core Drupal files are located in /usr/share/drupal6/ and are shared between all the Drupal sites on the server. They should not be edited since all changes to these files will be lost upon upgrade of the Debian Drupal package.
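To make the split between per-site and shared files concrete, here is a minimal Python sketch of the layout described above. It assumes only the paths given in this section (`/etc/drupal/6/sites/<hostname>/` for site files, `/usr/share/drupal6/` for core) and uses a deliberately simplified lookup order: the site’s own `modules/` first, then core’s `modules/`. Drupal’s real search also considers other locations such as `sites/all/`, so this illustrates the idea rather than reproducing Drupal’s algorithm. The helper names (`site_paths`, `resolve_module`, `is_core_path`) are hypothetical.

```python
from pathlib import Path

# Assumed roots, taken from the paths described in this section.
SITES_ROOT = Path("/etc/drupal/6/sites")
CORE_ROOT = Path("/usr/share/drupal6")

# Per-site directories that the customer manages.
SITE_SUBDIRS = ("files", "themes", "modules", "libraries")


def site_paths(hostname: str, sites_root: Path = SITES_ROOT) -> dict:
    """Return the customer-editable paths for one site."""
    base = sites_root / hostname
    paths = {name: base / name for name in SITE_SUBDIRS}
    paths["dbconfig"] = base / "dbconfig.php"
    paths["settings"] = base / "settings.php"
    return paths


def resolve_module(name: str, hostname: str,
                   sites_root: Path = SITES_ROOT,
                   core_root: Path = CORE_ROOT):
    """Simplified lookup: prefer the site's own copy, else fall back to core."""
    candidates = [
        sites_root / hostname / "modules" / name,
        core_root / "modules" / name,
    ]
    for candidate in candidates:
        if candidate.is_dir():
            return candidate
    return None  # not found in either location


def is_core_path(path, core_root: Path = CORE_ROOT) -> bool:
    """True if path lies inside the shared core tree (files not to be edited)."""
    try:
        Path(path).resolve().relative_to(core_root.resolve())
        return True
    except ValueError:  # relative_to raises when path is outside core_root
        return False
```

A check like `is_core_path` could, for example, guard a deployment script so it refuses to write into the shared core tree, since any change there would be lost on the next package upgrade.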

Users & Permissions

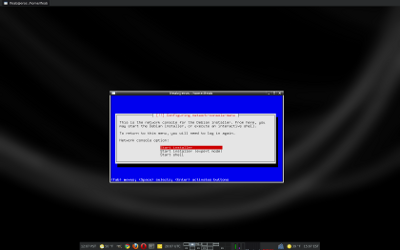

A jailed userdrupal FTP account is created to manage the files for the Drupal install.

The userdrupal account is the owner of all the configurable files located in /etc/drupal/6/sites/drupal.example.com/.

The system-wide www-data user must be given the following access, by making the www-data group the group owner of these paths and setting the appropriate group permissions:

- Read access to dbconfig.php

- Write access to the files/ directory (this is where Drupal typically stores uploaded files)
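The two requirements above can be checked with a short script. This is a hypothetical audit helper, not a LinuxForce tool: it assumes the site directory layout from the previous section and checks only the group owner and group permission bits of `dbconfig.php` and `files/`. It does not inspect ACLs or the rest of the site tree.

```python
import os
import stat
from pathlib import Path


def _check(path: Path, web_gid: int, required_bits: int, label: str) -> list:
    """Compare one path's group owner and group permission bits to what is required."""
    problems = []
    st = os.stat(path)
    if st.st_gid != web_gid:
        problems.append(f"{path}: group id is {st.st_gid}, expected {web_gid}")
    if (st.st_mode & required_bits) != required_bits:
        problems.append(f"{path}: not {label}")
    return problems


def audit_site_permissions(site_dir, web_gid: int) -> list:
    """Return a list of problems; an empty list means both checks passed.

    Checks only the two requirements listed above:
      * dbconfig.php: owned by the web server's group and group-readable
      * files/: owned by the web server's group and group-writable
    """
    site_dir = Path(site_dir)
    problems = _check(site_dir / "dbconfig.php", web_gid,
                      stat.S_IRGRP, "group-readable")
    # Creating files inside a directory needs both write and search (x) permission.
    problems += _check(site_dir / "files", web_gid,
                       stat.S_IWGRP | stat.S_IXGRP, "group-writable")
    return problems
```

On a server, the web group’s ID could be looked up with `grp.getgrnam("www-data").gr_gid` and passed in together with a path such as `/etc/drupal/6/sites/drupal.example.com`.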

All files (with the exception of dbconfig.php) should be writable by userdrupal. The userdrupal group itself is enforced by the system, but to maintain strict security all users should also configure their clients to respect group read-write permissions, which means a umask of 002.
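A umask clears permission bits from whatever mode a program requests when it creates a file. The minimal sketch below (helper names are hypothetical) shows the arithmetic and why 002 matters: with umask 002, new files come out as 664 and directories as 775, while with the common default of 022 they come out as 644 and 755, so other members of the userdrupal group could not edit what you upload.

```python
import os
import tempfile
from pathlib import Path


def resulting_mode(requested: int, umask: int) -> int:
    """Permission bits that remain after the umask clears its bits."""
    return requested & ~umask & 0o777


def create_file_with_umask(path, umask: int) -> int:
    """Create an empty file under a temporary umask and return its mode bits."""
    old = os.umask(umask)  # os.umask returns the previous value
    try:
        fd = os.open(path, os.O_CREAT | os.O_WRONLY | os.O_EXCL, 0o666)
        os.close(fd)
    finally:
        os.umask(old)  # always restore the process umask
    return os.stat(path).st_mode & 0o777


if __name__ == "__main__":
    # Most programs request 0o666 for new files and 0o777 for new directories.
    for mask in (0o002, 0o022):
        print(f"umask {mask:03o}: files {resulting_mode(0o666, mask):03o}, "
              f"directories {resulting_mode(0o777, mask):03o}")
    with tempfile.TemporaryDirectory() as tmp:
        print(oct(create_file_with_umask(Path(tmp) / "upload.txt", 0o002)))
```

The real file-creation check may differ from the pure arithmetic on filesystems that apply default ACLs, which override the umask.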

Additional Users and non-Drupal Directories

If a non-developer needs access to the files/ directory, for instance, a dedicated FTP user may be created for that purpose and added to the userdrupal group.

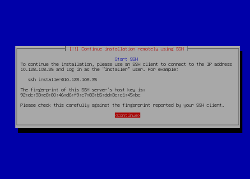

For ease of administration when multiple users exist, and if we are able to support an SSH account for administration (this requires a static IP address from the client), sudo can be configured to allow that user to run commands as the userdrupal FTP user. Remember to add “umask 002” to that user’s .bashrc so that group read-write permissions are respected.
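Adding the umask line by hand is easy, but when several accounts need it, a small idempotent helper avoids duplicate lines. This is a minimal sketch (the function name is hypothetical) that appends `umask 002` only if an identical line is not already present. It checks only for that exact line and does not inspect other `umask` commands that may already be in the file.

```python
from pathlib import Path

UMASK_LINE = "umask 002"


def ensure_umask_in_bashrc(bashrc) -> bool:
    """Append 'umask 002' to a .bashrc unless an identical line already exists.

    Returns True if the file was changed, False if it was left alone.
    """
    bashrc = Path(bashrc)
    text = bashrc.read_text() if bashrc.exists() else ""
    if any(line.strip() == UMASK_LINE for line in text.splitlines()):
        return False
    # Make sure the new line starts on its own line.
    if text and not text.endswith("\n"):
        text += "\n"
    bashrc.write_text(text + UMASK_LINE + "\n")
    return True
```

Run for the administrative account, the call would look like `ensure_umask_in_bashrc(Path.home() / ".bashrc")`; calling it again leaves the file unchanged.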

If a site requires additional directories outside the Drupal infrastructure, they will be placed in /srv/www/example.com/ and a separate jailed FTP user will be created to manage the files there. If a cgi-bin is required, it will be placed in /srv/www/example.com/cgi-bin/.

Caveats and Cautions

Because the core Drupal files located in /usr/share/drupal6/ are shared between sites, you may experience problems with the following:

- Some drush commands and plugins assume that the Drupal core files are editable and live in the same document root as the rest of your files, and so may not work as expected

- When the drupal6 package is upgraded, all sites are upgraded at once without individual testing. Customers who are concerned about the impact of upgrade changes and require very high uptime can be accommodated by testing their site against the upgraded versions of PHP and Drupal in a testing VM

- Since the Debian package name does not change between Debian releases, you cannot install the drupal6 package from an older Debian release alongside the one from the current release. If support is uncertain, a separate virtualized Debian environment may be needed for testing upgrades. (This issue does not arise when upgrading from drupal6 to drupal7, as those two packages can be installed alongside each other.)

- If root-level .htaccess files are to be used, a policy for handling them should be developed

Conclusion

Although the Debian way differs from the Drupal tarball approach, it makes it possible to scale the service to many sites while saving disk, memory and sysadmin effort. By leveraging the Drupal infrastructure provided by Debian, LinuxForce provides one-off Debian package deployments on dedicated systems, shared arrangements for small businesses running several sites, and infrastructure deployments for businesses that provide hosting services. We also offer a boutique hosting service for select customers on one of our systems.